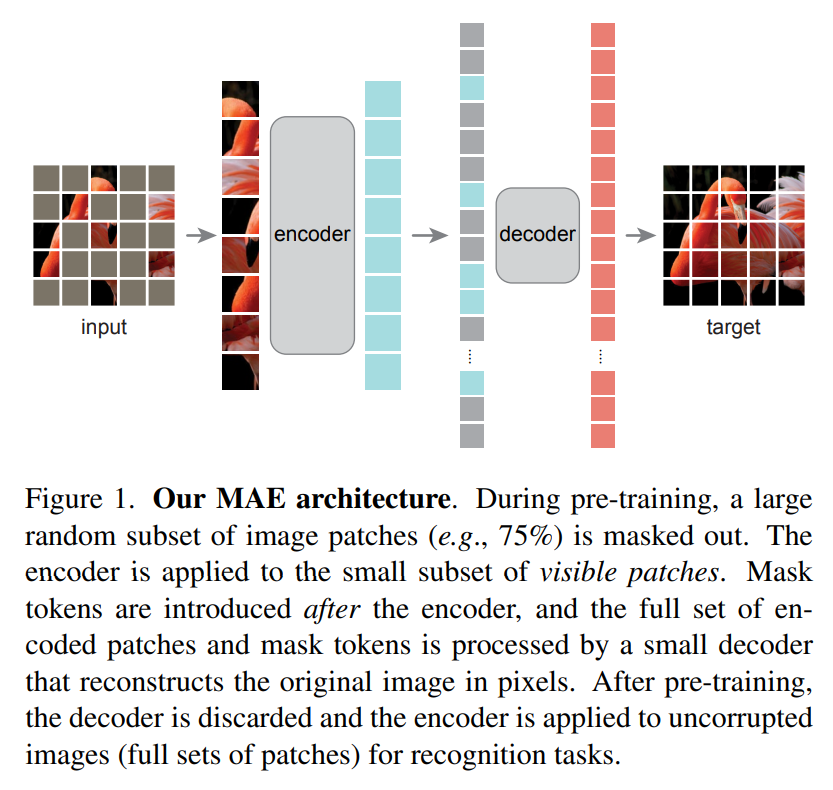

Building a Masked Autoencoder (MAE) from Scratch in PyTorch

Learn how a masked autoencoder (MAE) works and how to implement it in PyTorch

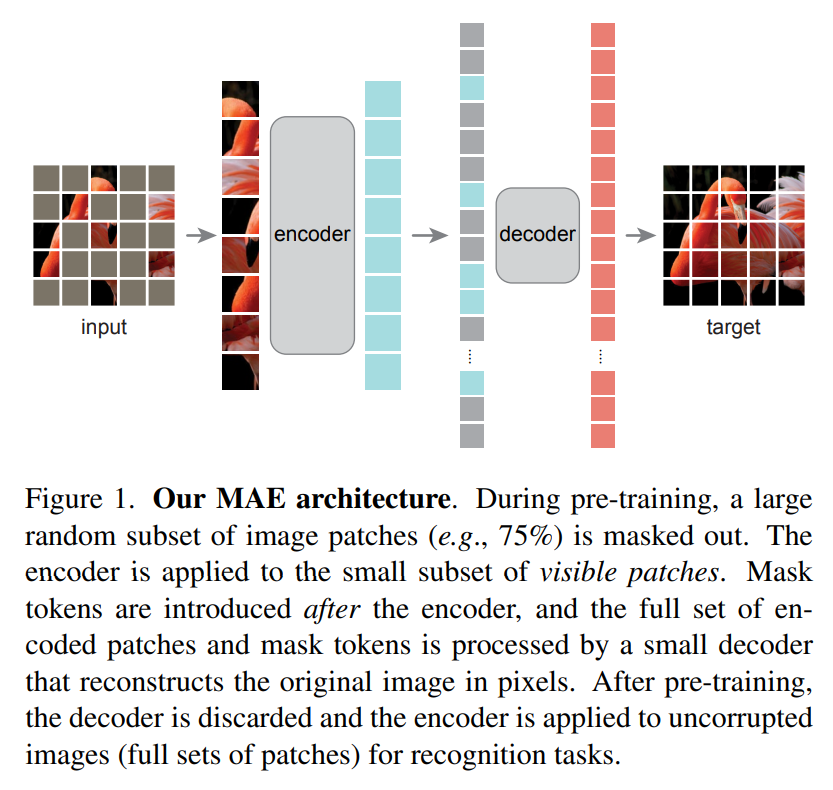

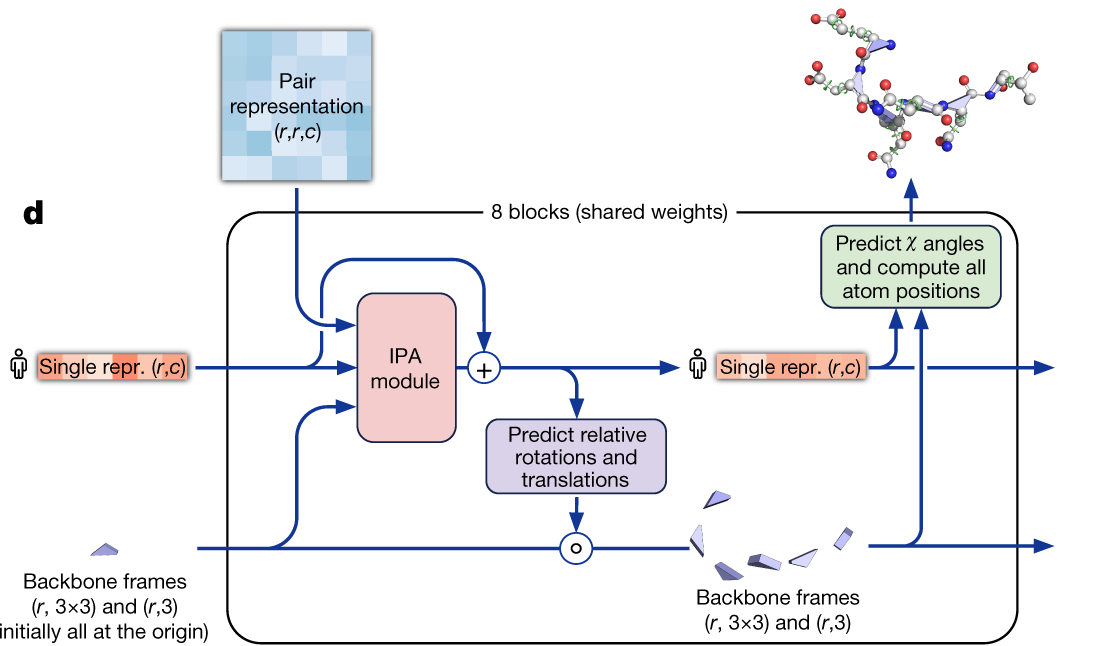

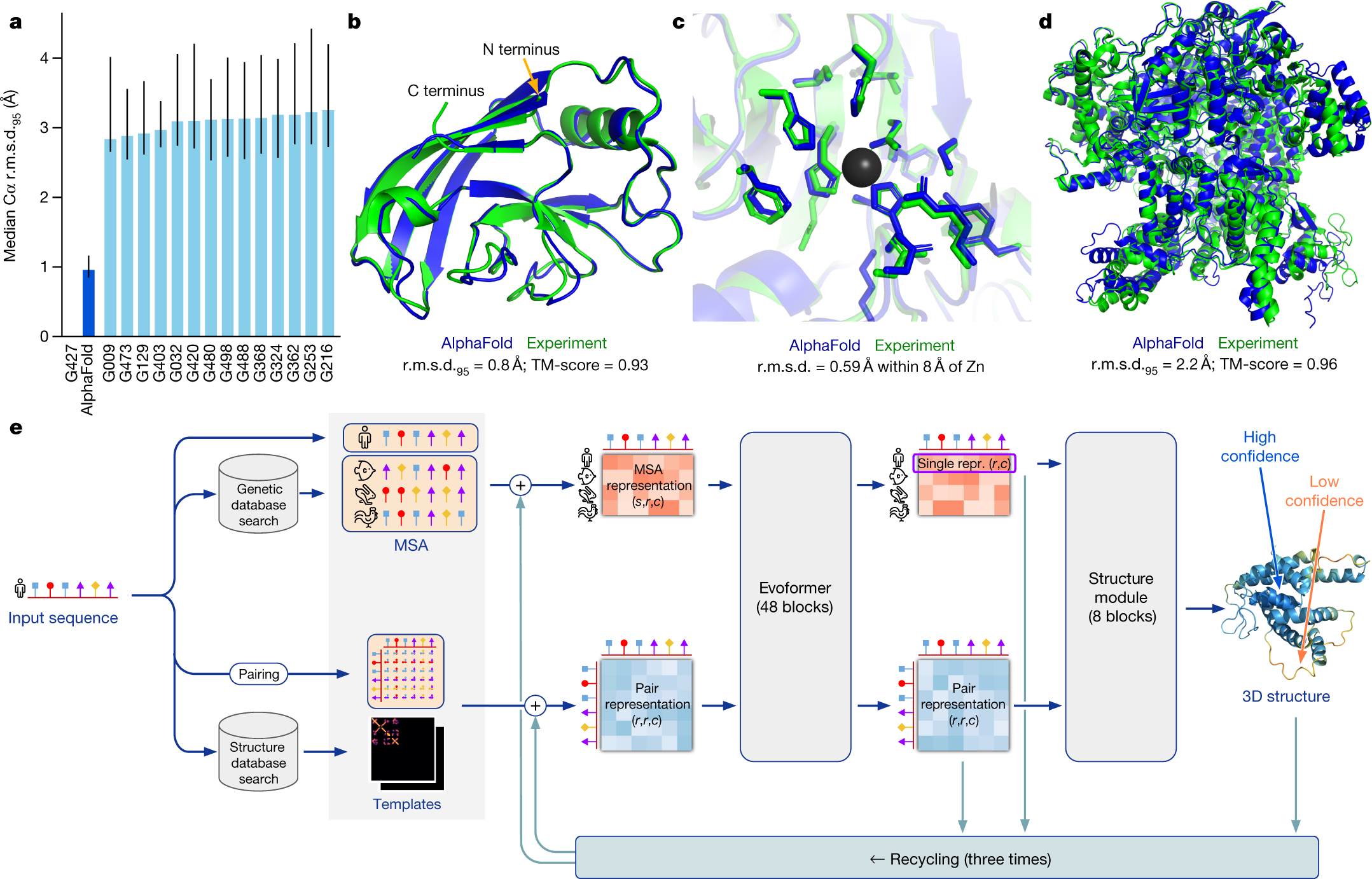

A detailed mathematical breakdown of AlphaFold2’s Evoformer block, explaining each operation with concrete matrix algebra and dimensions - Subpart 2/3

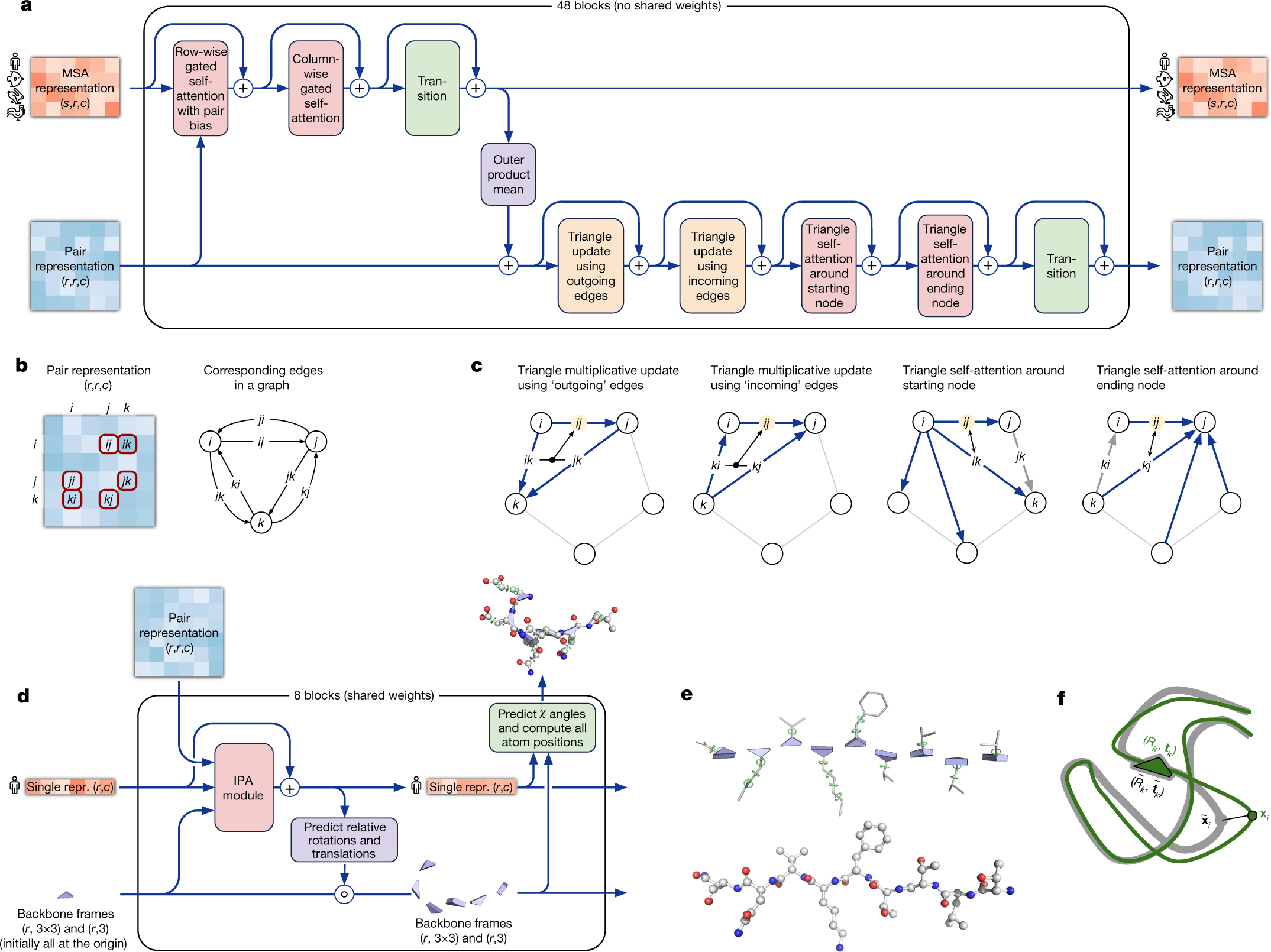

AlphaFold2’s Structure Module uses Invariant Point Attention to convert abstract Evoformer predictions into 3D atomic coordinates through iterative refinement, while multi-objective loss functions guide training - Subpart 3/3

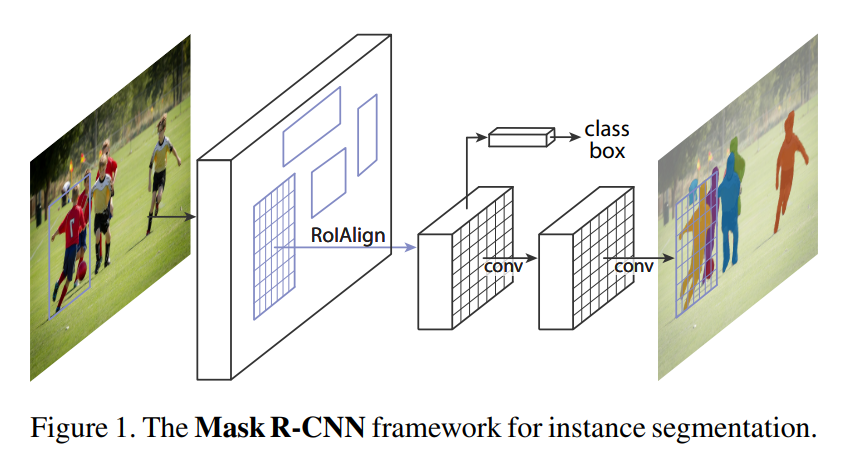

Mask R-CNN elegantly extends Faster R-CNN by adding a mask prediction branch, achieving state-of-the-art instance segmentation through simple yet effective architectural choices.

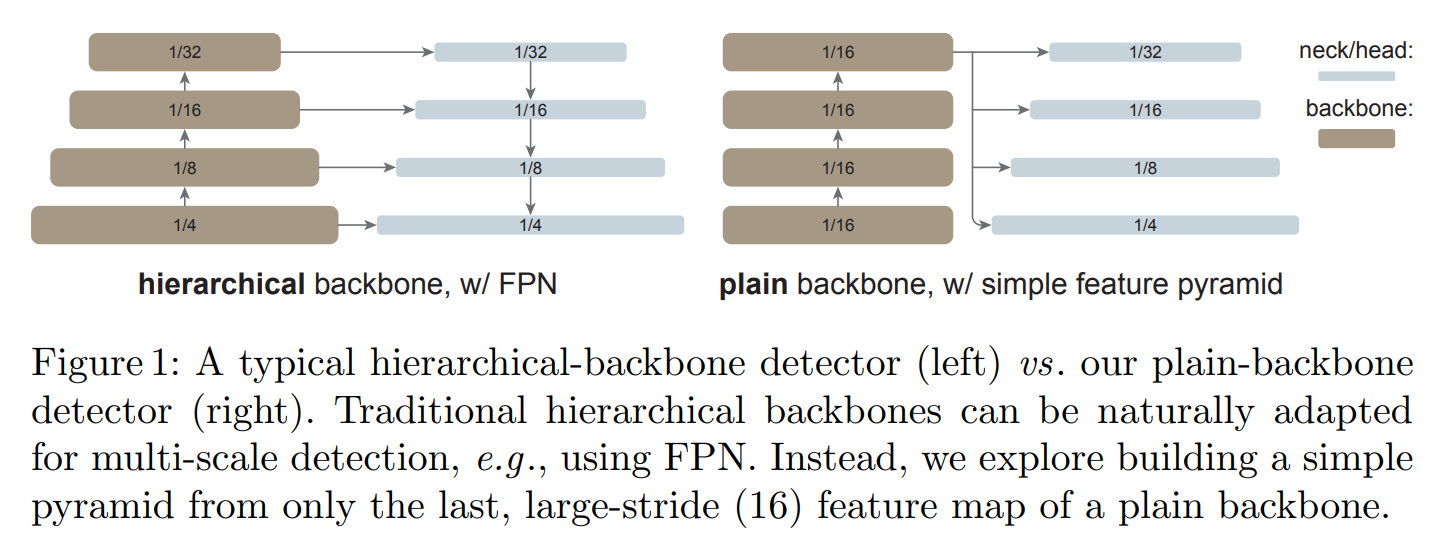

ViTDet demonstrates that plain, non-hierarchical Vision Transformers can compete with hierarchical backbones for object detection through simple adaptations.

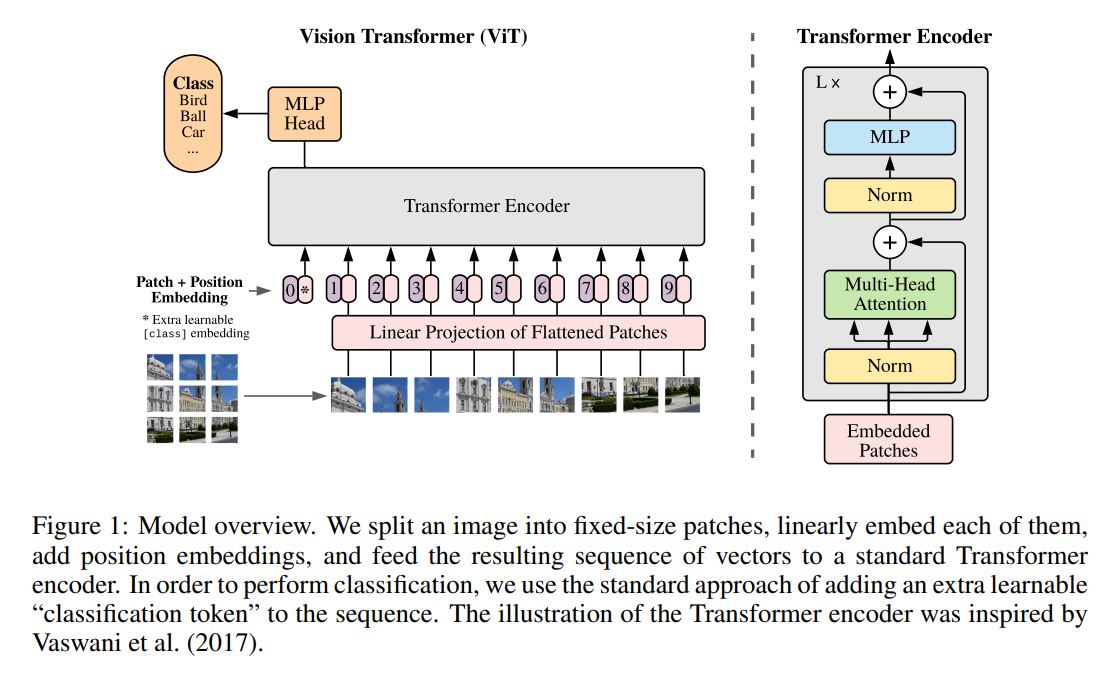

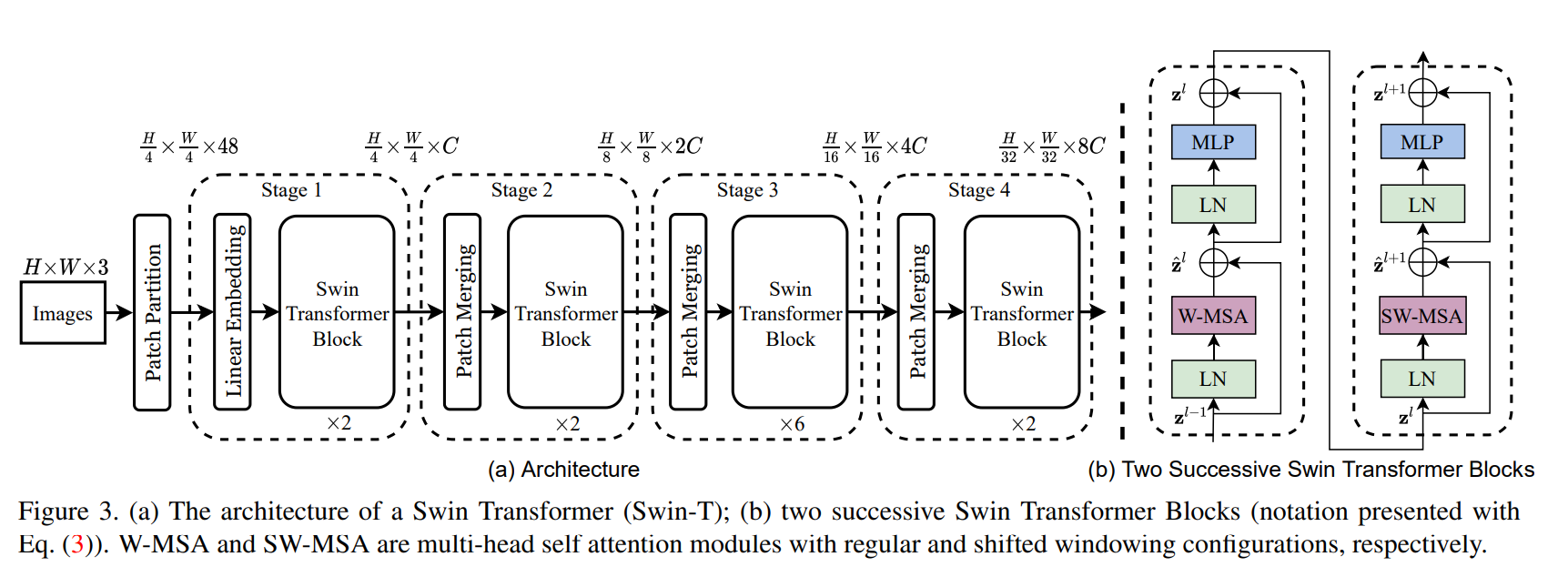

An in-depth look at ‘An Image is Worth 16x16 Words,’ the paper that introduced the pure Vision Transformer, its architecture, novelty, limitations, and how modern models like Swin Transformer evolved from it.

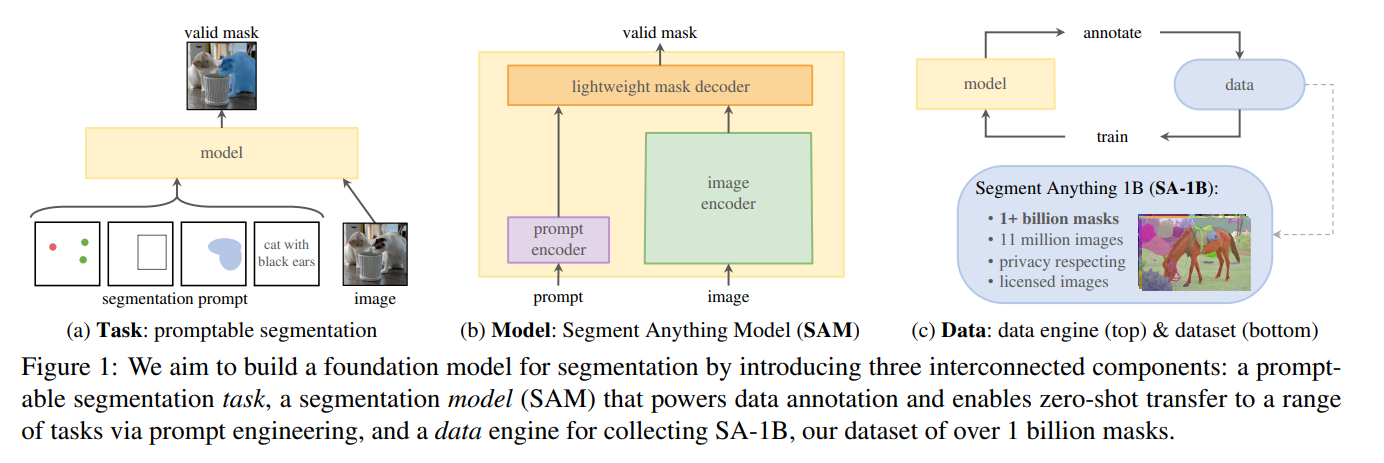

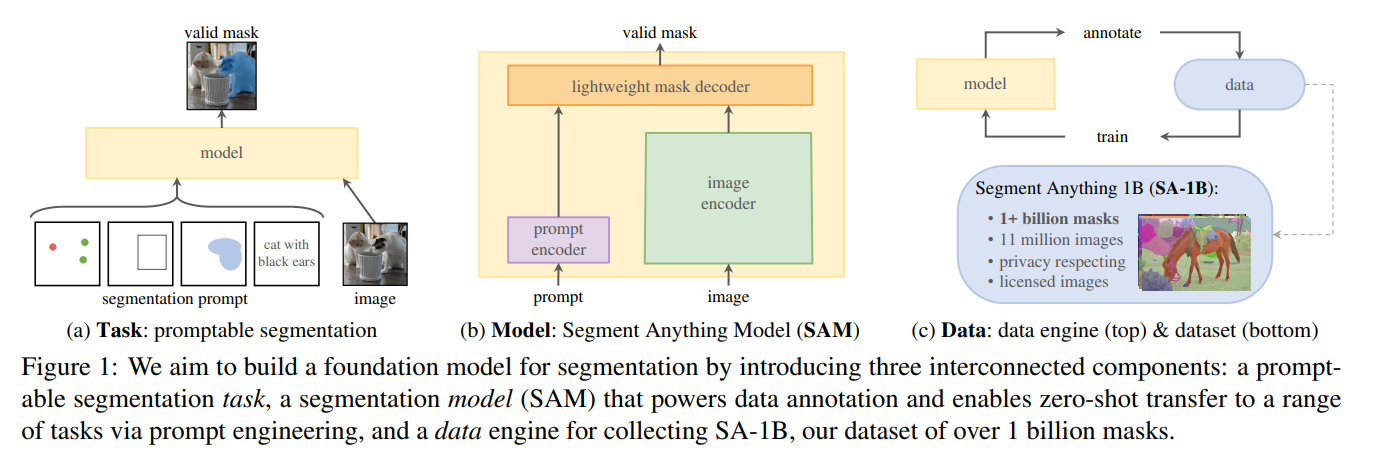

Meta’s Segment Anything Model (SAM 1) delivers a wide variety of predictions, detections, and segmentations with remarkable accuracy. Part 1 of 3.

Meta’s Segment Anything Model (SAM 1) delivers a wide variety of predictions, detections, and segmentations with remarkable accuracy. Part 2 of 3.

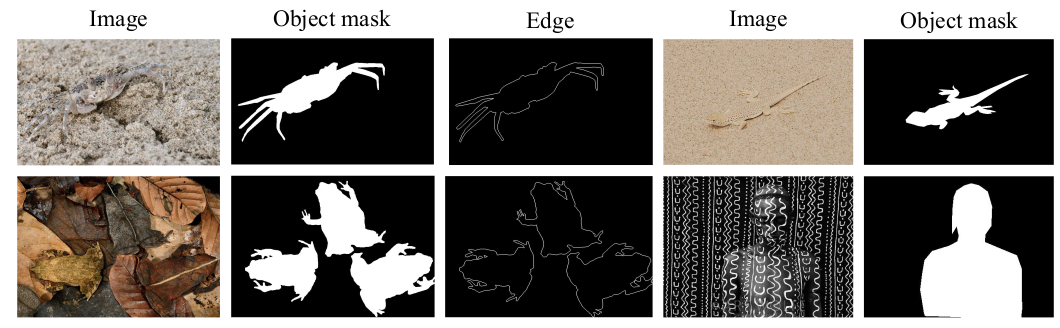

A brief technical comparison of the five most advanced camouflaged object detection methods in 2025, including ZoomNeXt, HGINet, RAG-SEG, MoQT, and SPEGNet, with detailed analysis of their architectures.

This post provides a minimal PyTorch implementation of Swin Transformer for a simple image classification task.

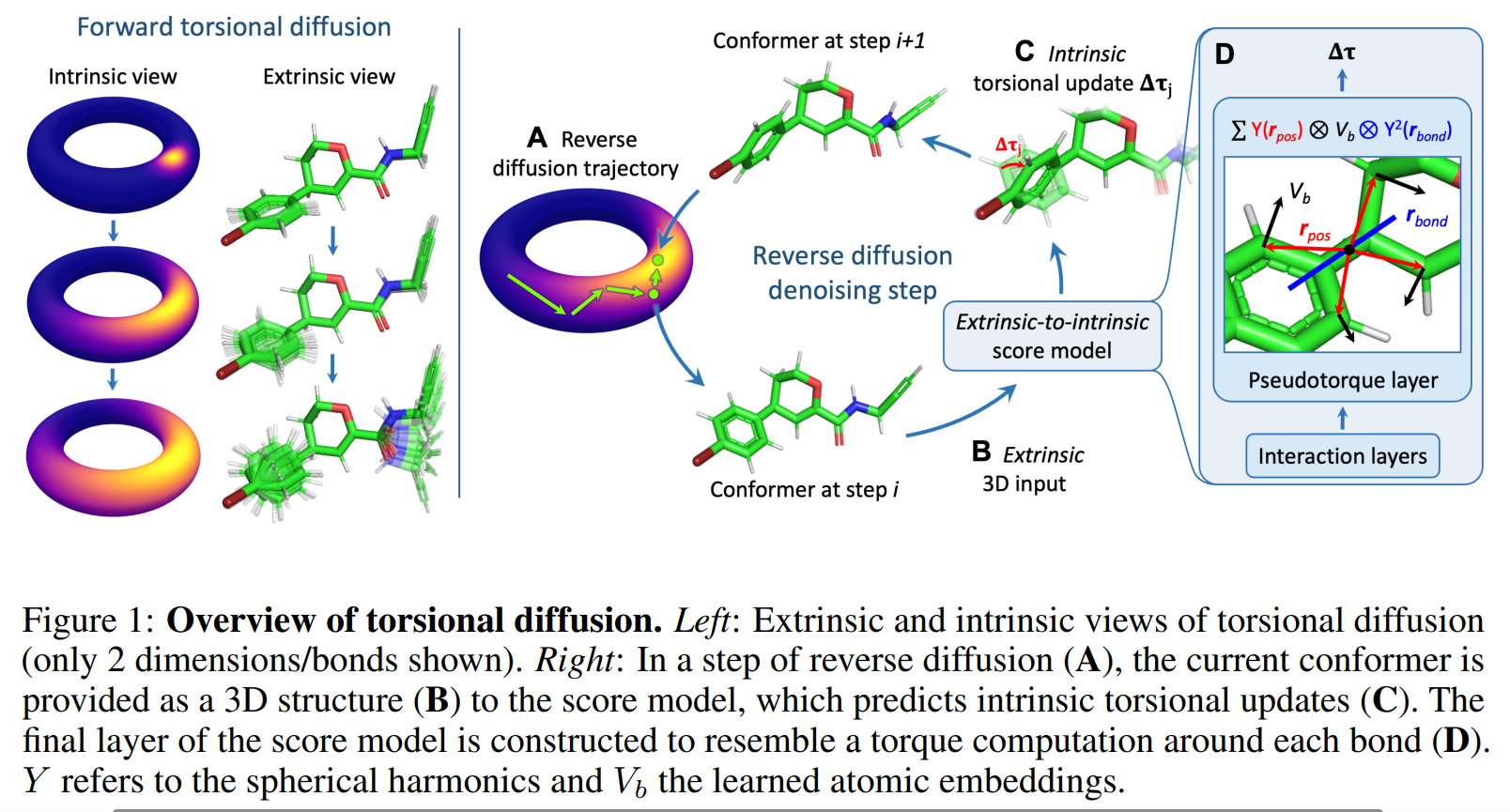

From prediction to creation (Subpart 2/3): Understanding diffusion models for molecular generation, with detailed implementation of torsional diffusion for 3D conformation generation.

From prediction to creation (Part 3/3): how AI generates novel drug molecules optimized for multiple objectives using autoregressive transformer architectures.

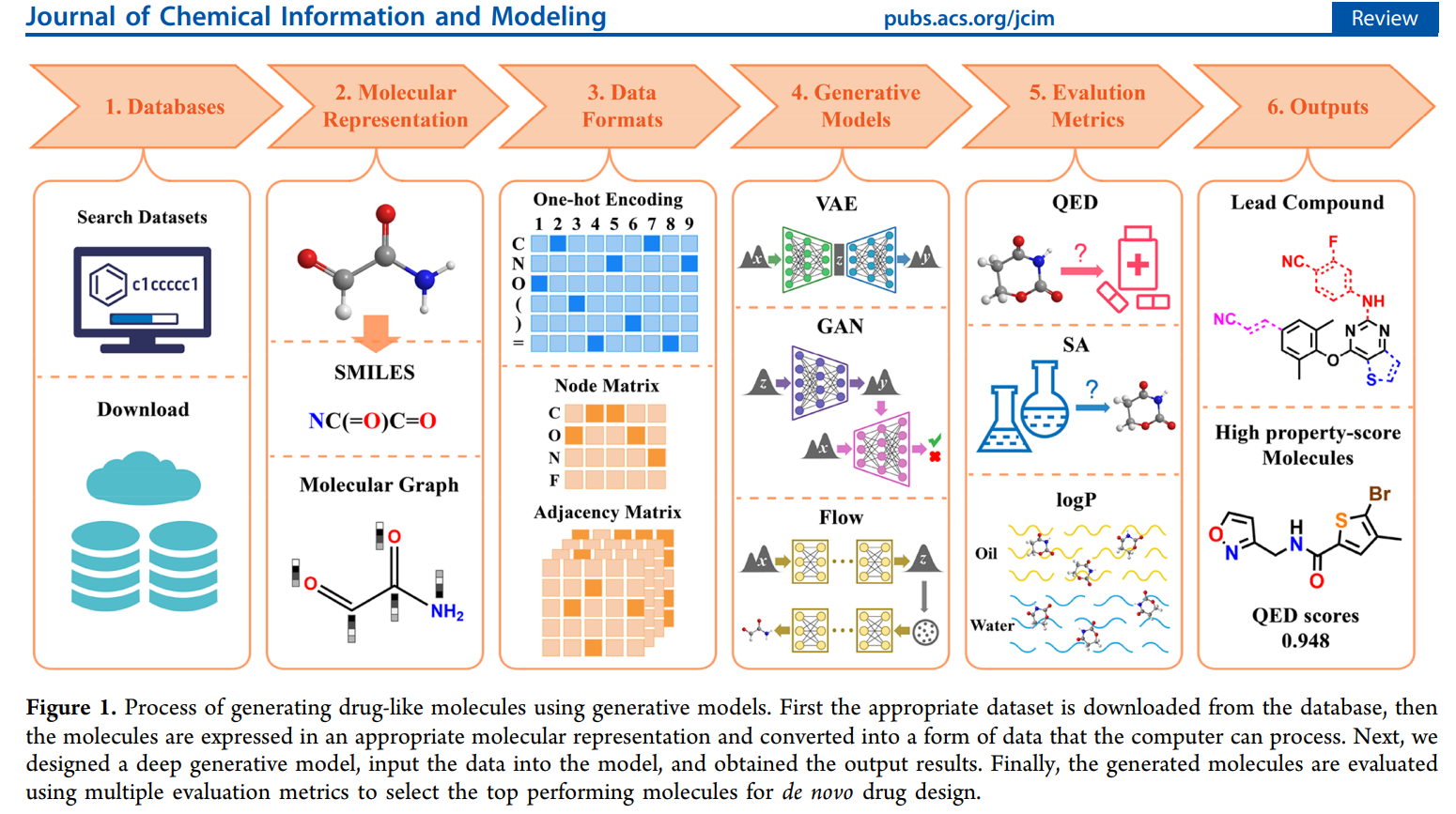

From prediction to creation (Subpart 1/3): A quick intro to how AI generates novel drug molecules optimized for multiple objectives using VAE and GAN model architectures.

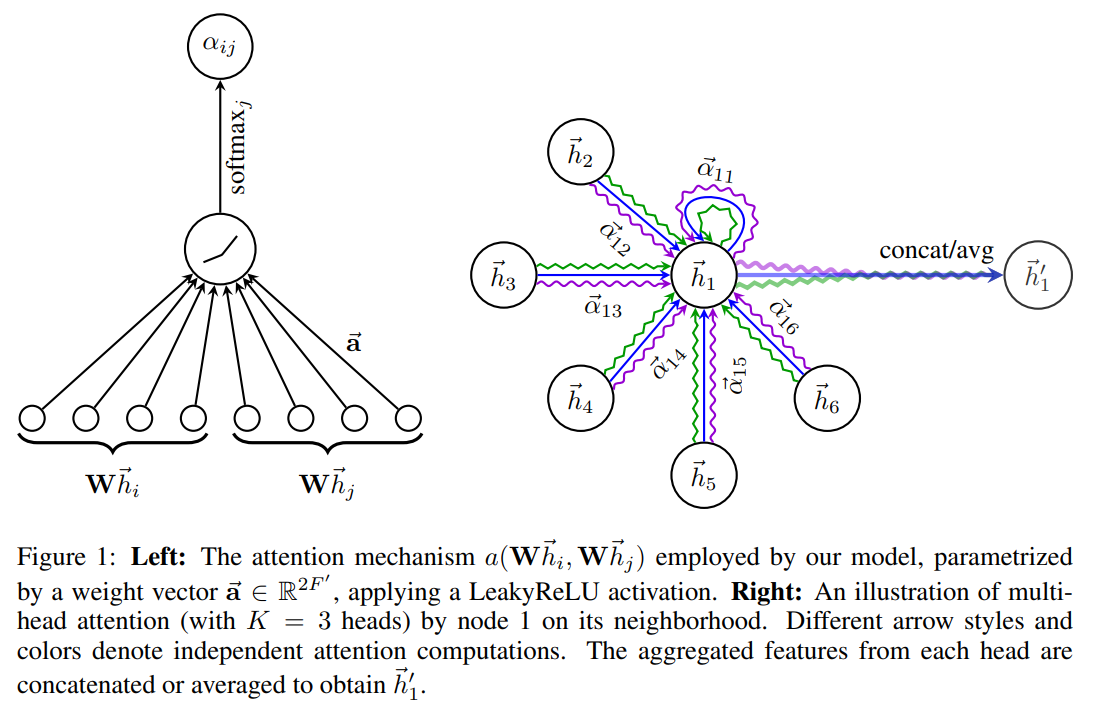

A technical deep-dive into Graph Neural Networks (GNNs) for predicting molecular properties. Learn how to construct molecular graphs, implement message passing architectures, and apply attention mechanisms to drug discovery tasks.

How DeepMind’s AlphaFold2 solved the 50-year grand challenge in biology – the protein folding problem – using transformers, evolutionary information, and geometric reasoning, and what it means for drug discovery - Subpart 1/3

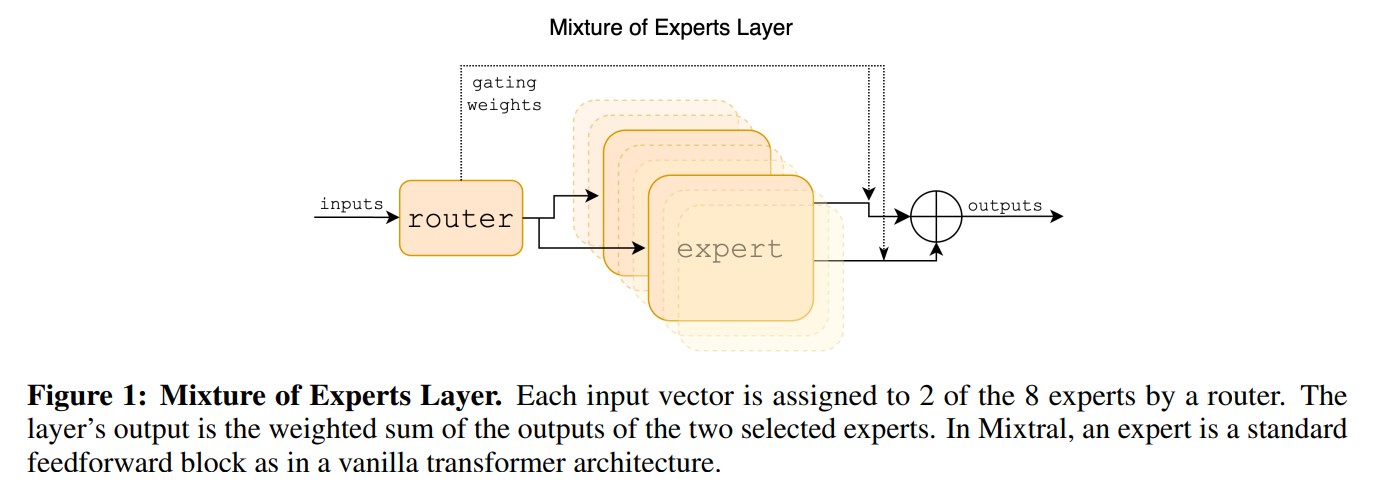

This blog post demystifies Mixture-of-Experts (MoE) layers, a key innovation for scaling Large Language Models efficiently. We’ll trace their origins, delve into the mathematical underpinnings, and build a foundational MoE block in PyTorch, mirroring the architecture from its initial conception.

This post details the machine learning strategy—including multi-task learning, transfer learning, and heatmap-based landmark detection—used to build an AI system that automates bone age assessment from X-ray images, achieving high accuracy with limited medical data.

Learn how KV-caching makes ChatGPT respond in seconds instead of minutes. This comprehensive guide explains the quadratic complexity problem in transformers, how caching Keys and Values solves it with 10-100x speedups, and the memory trade-offs - complete with full PyTorch implementations, benchmarks, and interactive visualizations.

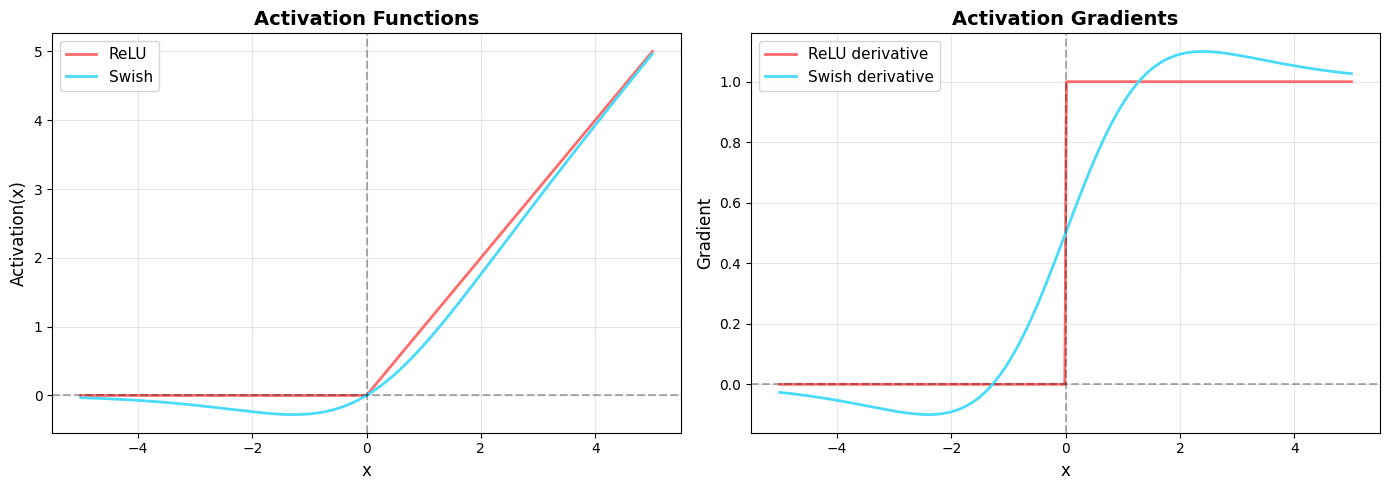

Discover why SwiGLU has replaced ReLU and GELU in modern transformers. This post explains the mathematical foundations, the evolution from sigmoid gates to Swish gates, and why this innovation delivers 5-8% performance gains - complete with Python implementations and interactive visualizations.

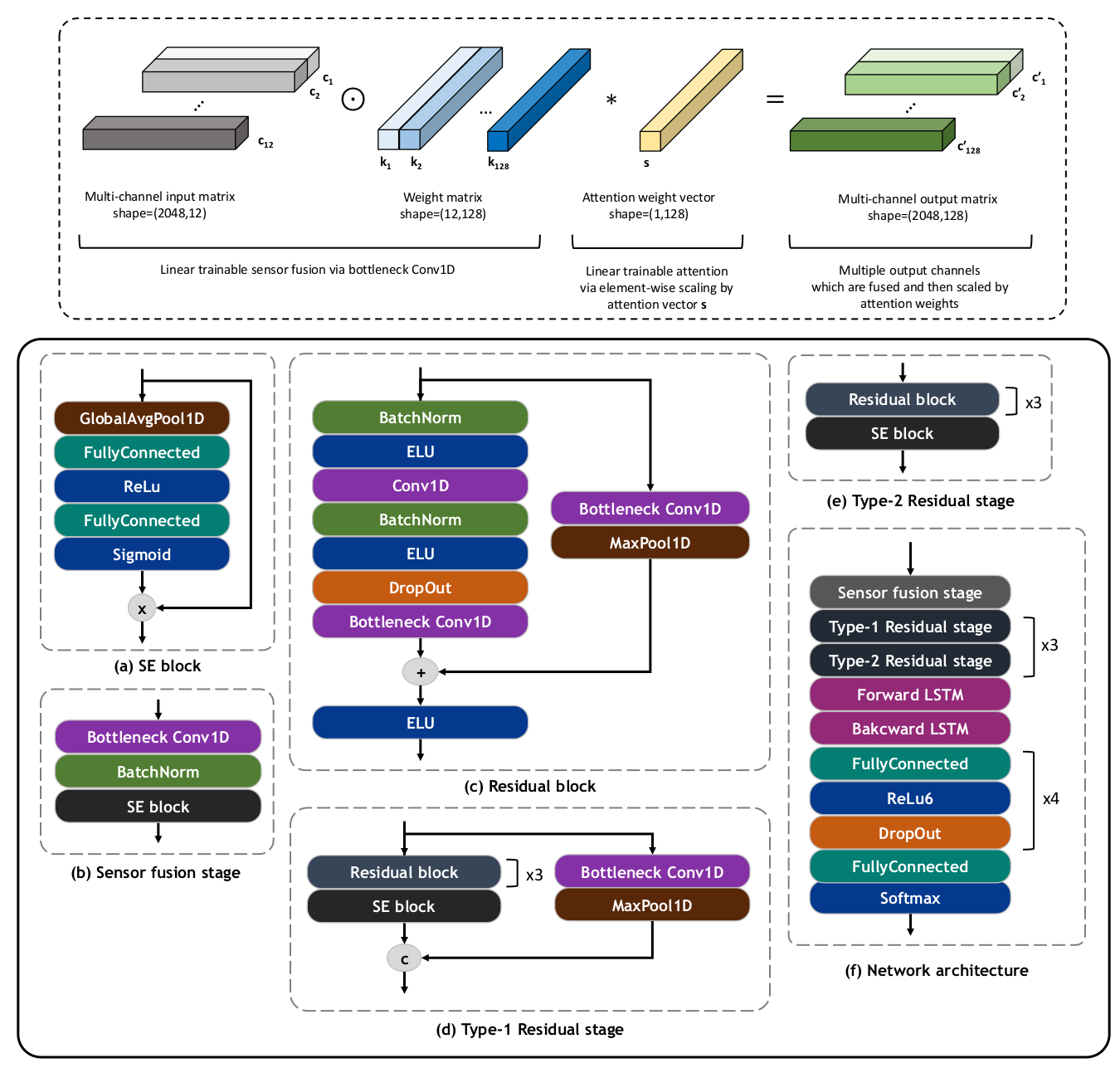

This paper presents a deep learning framework for detecting atrial fibrillation (AFib) by analyzing the heart’s mechanical functioning using smartphone mechanocardiography. The model achieves high accuracy in classifying sinus rhythm, AFib, and Noise categories.